Cockpit Intelligence

The operator's complete picture.

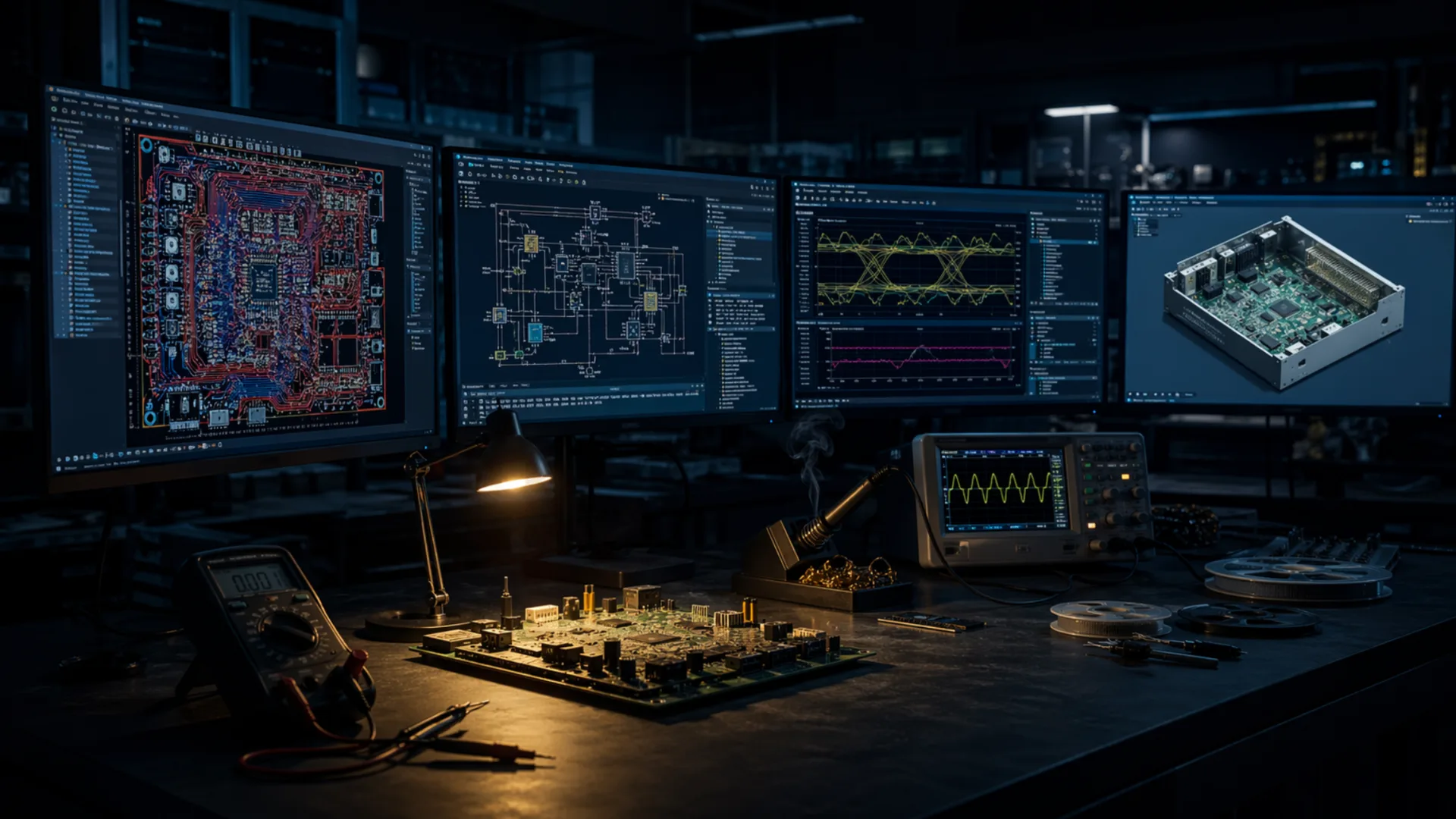

Every autonomous journey begins with the operator. Before any vehicle can make decisions on its own, the human in the cab needs complete situational awareness: vehicle systems, sensor status, navigation, and field conditions, all unified in a single ruggedized interface.

NyxCore, running on NXP i.MX8 silicon, is the compute foundation of Layer 01. BAQEN, Tarhund's ruggedized vehicle command terminal, is the interface layer: a low-latency touchscreen display that brings navigation, vehicle subsystem status, live sensor feeds, and operational data together on one field-ready screen.

- Real-time navigation and field mapping

- Vehicle subsystem status and health monitoring

- Live sensor data visualization

- Camera feed integration

- CAN / CAN-FD, Ethernet, and wireless connectivity

- Containerized software stack, adaptable to any vehicle architecture